Updated 7/29/2020

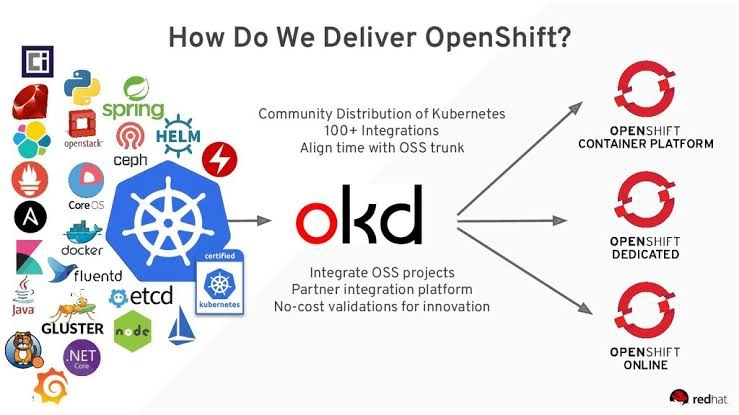

OKD is the upstream and community-supported version of the Red Hat OpenShift Container Platform (OCP). OpenShift expands vanilla Kubernetes into an application platform designed for enterprise use at scale. Starting with the release of OpenShift 4, the default operating system is Red Hat CoreOS, which provides an immutable infrastructure and automated updates. Fedora CoreOS, like OKD, is the upstream version of Red Hat CoreOS.

You can experience OpenShift in your home lab by using the open-source upstream combination of OKD and FCOS (Fedora CoreOS) to build your cluster. Used hardware for a home lab that can run an OKD cluster is relatively inexpensive these days ($250–$350), especially when compared to a cloud-hosted solution costing over $250 per month.

The purpose of this guide is to help you successfully build an OKD 4.5 cluster so that you can develop and learn to use OpenShift and OKD.

NOTE: In this guide, I use VMware ESXi as the hypervisor, but you could use Hyper-V, libvirt, Proxmox, VirtualBox, or even bare metal instead.

VM Overview:

For my installation, I used an ESXi 6.5 host with 96GB of RAM and a separate VLAN configured for OKD. Here is a breakdown of the virtual machines:

Create a new network in VMware for OKD:

Log in to your VMware host. Select Networking → Port Groups → Add port group. Set up an OKD network on an unused VLAN (in my case, VLAN 20).

Name your Group and set your VLAN ID.

Create the okd4-services VM:

Download the CentOS 8 DVD ISO (for example, CentOS-8.2.2004-x86_64-dvd1.iso) and upload it to your ESXi host datastore.

Create a new Virtual Machine. Choose Guest OS as Linux and Select CentOS 7 (64-bit).

Select the Datastore and customize the settings to 4 vCPU, 4GB RAM, 100GB HD. Add a 2nd network adapter connected to the OKD network. Attach the CentOS ISO.

Review your settings and click Finish.

Install CentOS 8 on the okd4-services VM:

Run through the CentOS 8 installation.

I prefer to use the “Standard Partition” storage configuration without mounting storage for /home. On the “Installation Destination” page, click on Custom under Storage Configuration, then Done.

On the Manual Partitioning page, select Standard Partition, then click “Click Here to Create them automatically.”

Select the “/home” partition and click the “-” to delete it.

Remove the contents of the “Desired Capacity” field, so it is blank and click Done, then Accept the changes.

For Software Selection, use Server with GUI and add the Guest Agents.

Enable the NIC connected to the VM Network and set the hostname as okd4-services, then click Apply and Done.

Click “Begin Installation” to start the install.

Set the Root password, and create an admin user.

After the installation has completed, log in and update the OS, then reboot.

sudo dnf install -y epel-release

sudo dnf update -y

sudo systemctl reboot

Set up XRDP for Remote Access from Home Network

sudo dnf install -y xrdp tigervnc-server

sudo systemctl enable --now xrdp

sudo firewall-cmd --zone=public --permanent --add-port=3389/tcp

sudo firewall-cmd --reload

Download Google Chrome rpm and install along with git

sudo dnf install -y ~/Downloads/google-chrome-stable_current_x86_64.rpm git

Create the okd4-pfsense VM:

Download the pfSense ISO and upload it to your ESXi host’s datastore.

Create a new Virtual Machine. Choose Guest OS as Other and Select FreeBSD 64-bit.

Use the default template settings for resources.

Select your home network for Network Adapter 1, and add a new network adapter using the OKD network.

Set up the okd4-pfsense VM:

Power on your pfSense VM and run through the installation using all the default values. After completion your VM console should look like this:

Log in to pfSense via your web browser on the okd4-services VM. The default username is “admin” and the password is “pfsense”.

After logging in, click Next, use “okd4-pfsense” for the hostname and “okd.local” for the domain, and add 192.168.1.210 as the Primary DNS server.

Select your Timezone. Next.

Use Defaults for WAN Configuration. Uncheck “Block RFC1918 Private Networks” since your home network is the “WAN” in this setup. Next.

Use the default LAN IP and subnet mask. Set an admin password on the next screen.

Create bootstrap, master, and worker nodes:

Download the Fedora CoreOS Bare Metal ISO and upload it to your ESXi datastore.

The latest stable version at the time of writing is 32.20200715.3.0

Create the six OKD nodes (bootstrap, master, worker) on your ESXi host using the values in the spreadsheet at the beginning of this post:

You should end up with the following VMs:

Set up DHCP reservations:

Compile a list of the OKD nodes' MAC addresses by viewing the hardware configuration of your VMs.

Log in to pfSense. Go to Services → DHCP Server, change your ending range IP to 192.168.1.99, set the primary DNS server to 192.168.1.210, then click Save.

On the DHCP Server page, click Add at the bottom.

Fill in the MAC Address, IP Address, and Hostname, then click Save. Do this for each OKD VM. Also, include the okd4-services MAC on the OKD network while you are at it. Click Apply Changes at the top of the page when complete.

Configure okd4-services VM to host various services:

The okd4-services VM is used to provide DNS, NFS exports, web server, and load balancing.

Copy the MAC address on the VM Hardware configuration page for the NIC connected to the OKD network and set up a DHCP Reservation for this VM using the IP address 192.168.1.210.

Hit “Apply Changes” at the top of the DHCP page when completed.

Open a terminal on the okd4-services VM and clone the okd4_files repo that contains the DNS, HAProxy, and install-config.yaml example files:

cd

git clone https://github.com/cragr/okd4_files.git

cd okd4_files

Install bind (DNS)

sudo dnf -y install bind bind-utils

Copy the named config files and zones:

sudo cp named.conf /etc/named.conf

sudo cp named.conf.local /etc/named/

sudo mkdir /etc/named/zones

sudo cp db* /etc/named/zones

Enable and start named:

sudo systemctl enable named

sudo systemctl start named

sudo systemctl status named

Create firewall rules:

sudo firewall-cmd --permanent --add-port=53/udp

sudo firewall-cmd --reload

Change the DNS server on the okd4-services NIC that is attached to the VM Network (not OKD) to 127.0.0.1.

Restart the network services on the okd4-services VM:

sudo systemctl restart NetworkManager

Test DNS on the okd4-services VM:

dig okd.local

dig -x 192.168.1.210
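To check several records in one pass, a small loop around dig can help. This is a minimal sketch: `check_dns` and the `DIG` override are hypothetical helpers, and the `api` and `api-int` names in the usage comment are assumptions inferred from the `lab.okd.local` cluster domain used later in this guide, so adjust them to match your zone files.

```shell
# check_dns SERVER NAME... : report whether each NAME resolves via SERVER.
# DIG may be overridden (for example with a stub) and defaults to dig.
check_dns() {
  server=$1
  shift
  rc=0
  for name in "$@"; do
    answer=$(${DIG:-dig} +short "$name" "@$server")
    if [ -n "$answer" ]; then
      echo "ok   $name -> $answer"
    else
      echo "FAIL $name"
      rc=1
    fi
  done
  return "$rc"
}

# Example call (names are assumptions, see above):
# check_dns 127.0.0.1 api.lab.okd.local api-int.lab.okd.local \
#   console-openshift-console.apps.lab.okd.local
```

The function returns a non-zero status if any name fails to resolve, so it can gate later steps in a script.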

With DNS working correctly, you should see the following results:

Install HAProxy:

sudo dnf install haproxy -y

Copy the HAProxy config from the okd4_files directory:

sudo cp haproxy.cfg /etc/haproxy/haproxy.cfg

Start, enable, and verify the HAProxy service:

sudo setsebool -P haproxy_connect_any 1

sudo systemctl enable haproxy

sudo systemctl start haproxy

sudo systemctl status haproxy

Add OKD firewall ports:

sudo firewall-cmd --permanent --add-port=6443/tcp

sudo firewall-cmd --permanent --add-port=22623/tcp

sudo firewall-cmd --permanent --add-service=http

sudo firewall-cmd --permanent --add-service=https

sudo firewall-cmd --reload

Install Apache/HTTPD

sudo dnf install -y httpd

Change httpd to listen on port 8080:

sudo sed -i 's/Listen 80/Listen 8080/' /etc/httpd/conf/httpd.conf
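One caveat: the pattern `Listen 80` also matches the start of `Listen 8080`, so running the command a second time would produce `Listen 808080`. A hedged alternative that anchors the match to the whole line is sketched below on a throwaway sample file (the temp file stands in for httpd.conf and is purely illustrative).

```shell
# Work on a scratch file so the demonstration is harmless.
conf=$(mktemp)
printf '%s\n' '# sample config' 'Listen 80' > "$conf"

# Anchoring with ^ and $ changes only a line that is exactly "Listen 80",
# so repeating the command leaves "Listen 8080" untouched.
sed -i.bak 's/^Listen 80$/Listen 8080/' "$conf"
sed -i.bak 's/^Listen 80$/Listen 8080/' "$conf"

grep '^Listen' "$conf"
```

After both runs the sample file still contains a single `Listen 8080` line.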

Enable and Start httpd service/Allow port 8080 on the firewall:

sudo setsebool -P httpd_read_user_content 1

sudo systemctl enable httpd

sudo systemctl start httpd

sudo firewall-cmd --permanent --add-port=8080/tcp

sudo firewall-cmd --reload

Test the webserver:

curl localhost:8080

A successful curl should look like this:

Congratulations, You Are Half Way There!

Congrats! You should now have a separate home lab environment set up and ready for OKD. Now we can start the install.

Download the openshift-installer and oc client:

SSH to the okd4-services VM

To download the latest oc client and openshift-install binaries, you need to use an existing version of the oc client.

Download the 4.5 version of the oc client and openshift-install from the OKD releases page. Example:

cd

wget https://github.com/openshift/okd/releases/download/4.5.0-0.okd-2020-07-29-070316/openshift-client-linux-4.5.0-0.okd-2020-07-29-070316.tar.gz

wget https://github.com/openshift/okd/releases/download/4.5.0-0.okd-2020-07-29-070316/openshift-install-linux-4.5.0-0.okd-2020-07-29-070316.tar.gz

Extract the okd version of the oc client and openshift-install:

tar -zxvf openshift-client-linux-4.5.0-0.okd-2020-07-29-070316.tar.gz

tar -zxvf openshift-install-linux-4.5.0-0.okd-2020-07-29-070316.tar.gz

Move the kubectl, oc, and openshift-install to /usr/local/bin and show the version:

sudo mv kubectl oc openshift-install /usr/local/bin/

oc version

openshift-install version

The latest and recent releases are available at https://origin-release.svc.ci.openshift.org

Setup the openshift-installer:

In the install-config.yaml, you can use either a pull secret from Red Hat or the default of “{"auths":{"fake":{"auth": "bar"}}}” as the pull secret.

Generate an SSH key if you do not already have one.

ssh-keygen

Create an install directory and copy the install-config.yaml file:

cd

mkdir install_dir

cp okd4_files/install-config.yaml ./install_dir

Edit the install-config.yaml in the install_dir, insert your pull secret and SSH key, and back up the install-config.yaml, as it will be deleted in the next step:

vim ./install_dir/install-config.yaml

cp ./install_dir/install-config.yaml ./install_dir/install-config.yaml.bak

Generate the Kubernetes manifests for the cluster and ignore the warning:

openshift-install create manifests --dir=install_dir/

Modify the cluster-scheduler-02-config.yml manifest file to prevent Pods from being scheduled on the control plane machines:

sed -i 's/mastersSchedulable: true/mastersSchedulable: false/' install_dir/manifests/cluster-scheduler-02-config.yml

Now you can create the ignition-configs:

openshift-install create ignition-configs --dir=install_dir/

Note: If you reuse the install_dir, make sure it is empty. Hidden files are created after generating the configs, and they should be removed before you use the same folder on a 2nd attempt.
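Because the backup made earlier lives inside install_dir, wiping the folder would remove it too. The sketch below, built around a hypothetical helper named `reset_install_dir`, sets the backup aside, recreates the directory (which also clears hidden files such as `.openshift_install_state.json`), and restores the config.

```shell
# reset_install_dir DIR : empty DIR (hidden files included) but keep the
# install-config.yaml.bak backup and restore it as install-config.yaml.
reset_install_dir() {
  dir=${1%/}
  if [ -z "$dir" ]; then
    echo "refusing to operate on an empty path" >&2
    return 1
  fi
  backup="$dir/install-config.yaml.bak"
  if [ ! -f "$backup" ]; then
    echo "no backup found at $backup" >&2
    return 1
  fi
  saved=$(mktemp)
  cp "$backup" "$saved"
  rm -rf "$dir"
  mkdir -p "$dir"
  cp "$saved" "$dir/install-config.yaml"
  cp "$saved" "$dir/install-config.yaml.bak"
  rm -f "$saved"
}
```

A call such as `reset_install_dir ~/install_dir` would then leave only the config and its backup in place, ready for another `create manifests` run.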

Host ignition and Fedora CoreOS files on the webserver:

Create okd4 directory in /var/www/html:

sudo mkdir /var/www/html/okd4

Copy the install_dir contents to /var/www/html/okd4 and set permissions:

sudo cp -R install_dir/* /var/www/html/okd4/

sudo chown -R apache: /var/www/html/

sudo chmod -R 755 /var/www/html/

Test the webserver:

curl localhost:8080/okd4/metadata.json

Download the Fedora CoreOS bare-metal bios image and sig files and shorten the file names:

cd /var/www/html/okd4/

sudo wget https://builds.coreos.fedoraproject.org/prod/streams/stable/builds/32.20200715.3.0/x86_64/fedora-coreos-32.20200715.3.0-metal.x86_64.raw.xz

sudo wget https://builds.coreos.fedoraproject.org/prod/streams/stable/builds/32.20200715.3.0/x86_64/fedora-coreos-32.20200715.3.0-metal.x86_64.raw.xz.sig

sudo mv fedora-coreos-32.20200715.3.0-metal.x86_64.raw.xz fcos.raw.xz

sudo mv fedora-coreos-32.20200715.3.0-metal.x86_64.raw.xz.sig fcos.raw.xz.sig

sudo chown -R apache: /var/www/html/

sudo chmod -R 755 /var/www/html/
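Each node type in the following steps is booted with the same three kernel arguments, differing only in the ignition file. Since the line is typed by hand at the console, it can help to print it first and check it. The helper below is a minimal sketch; `fcos_kargs` is a hypothetical name, and the host, port, and paths are the ones used in this guide.

```shell
# fcos_kargs ROLE : print the kernel arguments for bootstrap, master, or worker.
fcos_kargs() {
  role=$1
  base=http://192.168.1.210:8080/okd4
  case "$role" in
    bootstrap|master|worker) ;;
    *) echo "unknown role: $role" >&2; return 1 ;;
  esac
  echo "coreos.inst.install_dev=/dev/sda coreos.inst.image_url=$base/fcos.raw.xz coreos.inst.ignition_url=$base/$role.ign"
}

fcos_kargs bootstrap
```

Calling it with `master` or `worker` prints the matching line for the other node types.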

Starting the bootstrap node:

Power on the okd4-bootstrap VM. Press the TAB key to edit the kernel boot options, add the following, then press Enter:

coreos.inst.install_dev=/dev/sda coreos.inst.image_url=http://192.168.1.210:8080/okd4/fcos.raw.xz coreos.inst.ignition_url=http://192.168.1.210:8080/okd4/bootstrap.ign

You should see that the fcos.raw.xz image and signature are downloading:

Starting the control plane nodes:

Power on the control-plane nodes and press the TAB key to edit the kernel boot options and add the following, then press enter:

coreos.inst.install_dev=/dev/sda coreos.inst.image_url=http://192.168.1.210:8080/okd4/fcos.raw.xz coreos.inst.ignition_url=http://192.168.1.210:8080/okd4/master.ign

You should see that the fcos.raw.xz image and signature are downloading:

Starting the compute nodes:

Power on the compute (worker) nodes and press the TAB key to edit the kernel boot options and add the following, then press Enter:

coreos.inst.install_dev=/dev/sda coreos.inst.image_url=http://192.168.1.210:8080/okd4/fcos.raw.xz coreos.inst.ignition_url=http://192.168.1.210:8080/okd4/worker.ign

You should see that the fcos.raw.xz image and signature are downloading:

It is normal for the worker nodes to display the following until the bootstrap process completes:

Monitor the bootstrap installation:

You can monitor the bootstrap process from the okd4-services node:

openshift-install --dir=install_dir/ wait-for bootstrap-complete --log-level=info

Once the bootstrap process is complete, which can take upwards of 30 minutes, you can shut down your bootstrap node. Now is a good time to edit /etc/haproxy/haproxy.cfg, comment out the bootstrap node, and reload the haproxy service.

sudo sed -i '/ okd4-bootstrap /s/^/#/' /etc/haproxy/haproxy.cfg

sudo systemctl reload haproxy
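Keep in mind that sed changes the file only when run with -i; without that flag it prints the edited text and leaves haproxy.cfg untouched. The sketch below shows the effect on a scratch file whose two backend lines, including the IP addresses, are invented for illustration (the real haproxy.cfg from the repo may differ).

```shell
cfg=$(mktemp)
cat > "$cfg" <<'EOF'
    server okd4-bootstrap 192.168.1.200:6443 check
    server okd4-control-plane-1 192.168.1.201:6443 check
EOF

# -i edits in place; the .bak suffix keeps a copy of the original file.
sed -i.bak '/ okd4-bootstrap /s/^/#/' "$cfg"

cat "$cfg"
```

Only the bootstrap line gains a leading `#`. On the real host, `haproxy -c -f /etc/haproxy/haproxy.cfg` can be used to validate the edited file before reloading the service.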

Login to the cluster and approve CSRs:

Now that the masters are online, you should be able to log in with the oc client. Use the following commands to log in and check the status of your cluster:

export KUBECONFIG=~/install_dir/auth/kubeconfig

oc whoami

oc get nodes

oc get csr

You should only see the master nodes and several CSRs waiting for approval. Install the jq package to assist with approving multiple CSRs at one time.

wget -O jq https://github.com/stedolan/jq/releases/download/jq-1.6/jq-linux64

chmod +x jq

sudo mv jq /usr/local/bin/

jq --version

Approve all the pending certs and check your nodes:

oc get csr -ojson | jq -r '.items[] | select(.status == {} ) | .metadata.name' | xargs oc adm certificate approve
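Worker nodes typically raise a second round of CSRs (for their serving certificates) once the first round is approved, so the approval usually has to be repeated. As an alternative that does not need jq, the OpenShift documentation lists pending requests with a go-template. The sketch below wraps that approach in a hypothetical helper named `approve_pending_csrs`.

```shell
# approve_pending_csrs : approve every CSR that has no status yet (pending).
approve_pending_csrs() {
  oc get csr -o go-template='{{range .items}}{{if not .status}}{{.metadata.name}}{{"\n"}}{{end}}{{end}}' |
    while read -r name; do
      if [ -n "$name" ]; then
        oc adm certificate approve "$name"
      fi
    done
}
```

Run `approve_pending_csrs`, wait a minute or so, check `oc get csr` again, and repeat until nothing is pending and `oc get nodes` lists the workers.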

Check the status of the cluster operators.

oc get clusteroperators

The console has just become available in my picture above. Get your kubeadmin password from the install_dir/auth folder and login to the web console:

cat install_dir/auth/kubeadmin-password

Open your web browser to https://console-openshift-console.apps.lab.okd.local/ and login as kubeadmin with the password from above:

The cluster status may still say upgrading while it finishes the installation.

Persistent Storage:

We need to create some persistent storage for our registry before we can complete this project. Let’s configure our okd4-services VM as an NFS server and use it for persistent storage.

Login to your okd4-services VM and begin to set up an NFS server. The following commands install the necessary packages, enable services, and configure file and folder permissions.

sudo dnf install -y nfs-utils

sudo systemctl enable nfs-server rpcbind

sudo systemctl start nfs-server rpcbind

sudo mkdir -p /var/nfsshare/registry

sudo chmod -R 777 /var/nfsshare

sudo chown -R nobody:nobody /var/nfsshare

Create an NFS Export

Add this line in the new /etc/exports file “/var/nfsshare 192.168.1.0/24(rw,sync,no_root_squash,no_all_squash,no_wdelay)”

echo '/var/nfsshare 192.168.1.0/24(rw,sync,no_root_squash,no_all_squash,no_wdelay)' | sudo tee /etc/exports

Restart the nfs-server service and add firewall rules:

sudo setsebool -P nfs_export_all_rw 1

sudo systemctl restart nfs-server

sudo firewall-cmd --permanent --zone=public --add-service mountd

sudo firewall-cmd --permanent --zone=public --add-service rpc-bind

sudo firewall-cmd --permanent --zone=public --add-service nfs

sudo firewall-cmd --reload

Registry configuration:

Create a persistent volume on the NFS share. Use the registry_pv.yaml in the okd4_files folder from the git repo:

oc create -f okd4_files/registry_pv.yaml

oc get pv
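The repo's registry_pv.yaml is not reproduced in this post. For orientation, an NFS-backed PersistentVolume for this setup might look roughly like the manifest written by the sketch below. The PV name, the 100Gi capacity, and the reclaim policy are assumptions; the path and server come from the NFS export configured above.

```shell
# Write an illustrative manifest to a scratch file; nothing is applied to the cluster.
pv_file=$(mktemp)
cat > "$pv_file" <<'EOF'
apiVersion: v1
kind: PersistentVolume
metadata:
  name: registry-pv            # assumed name
spec:
  capacity:
    storage: 100Gi             # assumed size
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain   # assumed policy
  nfs:
    path: /var/nfsshare/registry
    server: 192.168.1.210
EOF
echo "wrote $pv_file"
```

Comparing this against the file in the repo before creating the PV is a quick way to confirm that the path and server match your NFS export.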

Edit the image-registry operator:

oc edit configs.imageregistry.operator.openshift.io

Change managementState: from Removed to Managed. Under storage:, add pvc: with a blank claim: so that the PV is attached automatically, then save your changes:

    managementState: Managed
    storage:
      pvc:
        claim:

Check your persistent volume, and it should now be claimed:

oc get pv

Check the export size, which should be zero at this point.

du -sh /var/nfsshare/registry

In the next section, we will create a WordPress project and push it to the registry. After the push, the NFS export should show 200+ MB.

Create WordPress Project:

Create a new OKD project.

oc new-project wordpress-test

Create a new app using the CentOS PHP 7.3 s2i image from Docker Hub and use the WordPress GitHub repo for the source. Expose the service to create a route.

oc new-app centos/php-73-centos7~https://github.com/WordPress/WordPress.git

oc expose svc/wordpress

Create a new app using the centos7 MariaDB image with some environment variables:

oc new-app centos/mariadb-103-centos7 --name mariadb --env MYSQL_DATABASE=wordpress --env MYSQL_USER=wordpress --env MYSQL_PASSWORD=wordpress

Open the OpenShift console and browse to the wordpress-test project. Once the WordPress image is built and ready, it will be dark blue like the MariaDB instance shown here:

Click on the WordPress object and click on the route to open it in your web browser:

You should see the WordPress setup page. Click Let’s Go.

Fill in the database, username, password, and database host as pictured and run the installation:

Fill out the welcome information and click Install WordPress.

Log in, and you should have a working WordPress installation:

Check the size of your NFS export on the okd4-services VM. It should be around 300MB in size.

du -sh /var/nfsshare/registry/

You have just verified your persistent volume is working.

HTPasswd Setup:

The kubeadmin is a temporary user. The easiest way to set up a local user is with htpasswd.

cd

cd okd4_files

htpasswd -c -B -b users.htpasswd testuser testpassword

Create a secret in the openshift-config project using the users.htpasswd file you generated:

oc create secret generic htpass-secret --from-file=htpasswd=users.htpasswd -n openshift-config

Add the identity provider.

oc apply -f htpasswd_provider.yaml
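The contents of htpasswd_provider.yaml are not shown in this post. As a rough guide, an HTPasswd identity provider manifest that follows the OpenShift documentation would look something like the one written by the sketch below. The provider name and secret name match the ones used in this section; treat the rest as an assumption about the repo's file rather than a copy of it.

```shell
# Write an illustrative manifest to a scratch file; nothing is applied to the cluster.
oauth_file=$(mktemp)
cat > "$oauth_file" <<'EOF'
apiVersion: config.openshift.io/v1
kind: OAuth
metadata:
  name: cluster
spec:
  identityProviders:
  - name: htpasswd_provider
    mappingMethod: claim
    type: HTPasswd
    htpasswd:
      fileData:
        name: htpass-secret    # the secret created in openshift-config
EOF
echo "wrote $oauth_file"
```

The `fileData.name` field is what ties the provider to the secret, so the two names need to agree.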

Log out of the OpenShift Console. Then select htpasswd_provider and log in with the testuser and testpassword credentials.

If you visit the Administrator page you should see no projects:

Give yourself cluster-admin access, and the projects should immediately populate:

oc adm policy add-cluster-role-to-user cluster-admin testuser

Your user should now have cluster-admin level access:

Congrats! You have created an OKD Cluster!

Hopefully, you have created an OKD cluster and learned a few things along the way. At this point, you should have a decent basis to tinker with OpenShift and continue to learn.

Here are some resources available to help you along your journey:

To report issues, use the OKD GitHub repo: https://github.com/openshift/okd

For support check out the #openshift-users channel on k8s Slack

The OKD Working Group meets bi-weekly to discuss the development and next steps. The meeting schedule and location are tracked in the openshift/community repo.

Google group for okd-wg: https://groups.google.com/forum/#!forum/okd-wg